- FACESHIFT PERCEPTION NEURON UPDATE

- FACESHIFT PERCEPTION NEURON CODE

- FACESHIFT PERCEPTION NEURON SOFTWARE

- FACESHIFT PERCEPTION NEURON PROFESSIONAL

- FACESHIFT PERCEPTION NEURON FULL

Now, Subject 1 runs with the old file I had, and Subject 2 uses your file. I still can't share any of the Perception Neuron files I have, so you need to rename one of your files to "PerceptionNeuron2_Trial01.bvh" and put it in the BVH-files folder. Then you should be able to load the Subjects/PerceptionNeuron2/PerceptionNeuron2_Trial01/Main.any example. I have also ported the functionality from the official AnyBody model repository (based on xsens) that scales the model based on the BVH stick figure. It makes the model fit the data much better, but you will need to tweak this further. Let me know if you have any sample BVH files that could be shared as part of this example repository; I think that would help other users a lot.
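The scaling itself is done in AnyScript inside the AnyBody model; the Python below is only a hypothetical sketch of where the stick-figure dimensions come from. It assumes a standard BVH HIERARCHY section, in which each joint's OFFSET is its position relative to its parent joint, so the norm of that OFFSET is the length of the parent-to-joint segment. The function name `bvh_segment_lengths` is made up for illustration and is not part of the AnyBody repository.

```python
import math


def bvh_segment_lengths(bvh_text: str) -> dict:
    """Map each ROOT/JOINT name to the length of its OFFSET vector.

    In a BVH HIERARCHY, a joint's OFFSET is its position relative to its
    parent joint, so the vector norm is the parent-to-joint segment length
    (in the file's own units).
    """
    lengths = {}
    current = None  # joint whose OFFSET line we expect next
    for line in bvh_text.splitlines():
        tokens = line.split()
        if not tokens:
            continue
        if tokens[0] in ("ROOT", "JOINT"):
            current = tokens[1]
        elif tokens[0] == "End":
            current = None  # "End Site" offsets belong to no named joint
        elif tokens[0] == "OFFSET" and current is not None:
            x, y, z = (float(v) for v in tokens[1:4])
            lengths[current] = math.sqrt(x * x + y * y + z * z)
            current = None
        elif tokens[0] == "MOTION":
            break  # the hierarchy section is over
    return lengths


# Tiny hypothetical hierarchy: Hips (root) -> Spine -> End Site
sample = """HIERARCHY
ROOT Hips
{
  OFFSET 0 0 0
  JOINT Spine
  {
    OFFSET 0 3 4
    End Site
    {
      OFFSET 0 10 0
    }
  }
}
MOTION
"""
print(bvh_segment_lengths(sample))  # {'Hips': 0.0, 'Spine': 5.0}
```

Comparing these lengths with the corresponding model segment lengths would give per-segment scale factors; how the AnyBody model actually combines them is not shown here.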

FACESHIFT PERCEPTION NEURON UPDATE

I have pushed an update that enables you to switch between the two different Perception Neuron file structures I have seen.

WARNING(7) : Muscle.any(919) : pectoralis_major_clavicular_part_4.SPLine : Penetration of surface : CylSurf : Via-point 'O_pectoralis_major_clavicular_part_4' on 'SPLine' is located below the wrapping surface 'CylSurf'

ERROR(4) : AnyMocapModel.any(60) : InverseDynamicStudy.InverseDynamics : Muscle recruitment solver : solver aborted after maximum number of

WARNING(7) : Muscle.any(1254) : teres_major_3.SPLine : Penetration of surface : cyl : Via-point 'O_teres_major_3' on 'SPLine' is located below the wrapping surface 'cyl'

NoitomVPS: a complete virtual production solution for under 50,000. Starting at 49,000 for a 5x7 m capture space, NoitomVPS combines Perception Neuron Studio and hybrid tracking markers with optical cameras for …

Progressing to solve kinematic optimality conditions and hard constraints.

WARNING(6) : Interface.any(215) : GHRot : Close to singular position : Orientation close to Gimbal Lock, i.e., first and third axis of rotation being parallel

So, when I run the AnyBody model, these warnings and errors occur. (When collecting data, the sensors were placed at the center of the forehead and on the shoulders.)

NoitomVPS, one mocap ecosystem with limitless possibilities: it combines inertial and positional trackers to deliver a one-of-a-kind hybrid motion capture experience.

The upper-body markers (head, shoulders, spine) seem to be very far from each other. In particular, the shoulder position is too high and the clavicle is inclined too steeply.
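One way to put a number on a "steep" clavicle is to measure the angle between the clavicle segment and the horizontal plane. The sketch below assumes you already have 3D positions for the clavicle's proximal and distal ends (for example, a neck-base point and the shoulder joint, taken from the model or the BVH data) and that Y is the vertical axis, which is common in BVH files but worth checking for your data. `inclination_deg` is a hypothetical helper, not an AnyBody function.

```python
import math


def inclination_deg(proximal, distal, up_axis=1):
    """Angle in degrees between the segment proximal->distal and the horizontal plane.

    Positive means the distal end (e.g. the shoulder) is higher than the
    proximal end. up_axis is the index of the vertical coordinate;
    1 (= Y) is an assumption, not something guaranteed by every BVH file.
    """
    d = [b - a for a, b in zip(proximal, distal)]
    vertical = d[up_axis]
    # Length of the remaining (horizontal) components of the segment.
    horizontal = math.hypot(*(c for i, c in enumerate(d) if i != up_axis))
    return math.degrees(math.atan2(vertical, horizontal))


# Hypothetical positions: shoulder 1 unit above and 1 unit beside the neck base.
print(inclination_deg((0.0, 0.0, 0.0), (1.0, 1.0, 0.0)))
```

Computing this angle for both the recorded data and the scaled model makes it easier to see whether the mismatch comes from the marker placement or from the scaling.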

FACESHIFT PERCEPTION NEURON CODE

The format of the data may have changed. You may use my motion data for code updates if you want.

FACESHIFT PERCEPTION NEURON SOFTWARE

In other news, Faceshift is showcasing their non-pro studio version, which features accurate facial tracking, a streamlined UX, synchronized audio, flexible retargeting, and Intel RealSense support. You can learn more about Faceshift on their beautiful new website.

Hi. I tried to compare the Neuron Mocap BVH file I had with the ones you sent me. It mostly resembles the file "Data_v1_Char00.bvh" you had, but the file structure seems to have changed slightly. In particular, my BVH file has the following:

Spine->Spine1->Spine2->Spine3->Neck->Head

Maybe Perception Neuron has changed their file structure over time? Are you using the newest Perception Neuron system? If so, I could easily change the example. Do a search/replace in the marker protocol file: If this is the new structure for Perception Neuron models, I would be happy to update the example. You wouldn't happen to have a BVH file that I would be allowed to share as part of the repository?

The software for Perception Neuron has been updated.
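A quick way to tell which of the two file structures a given BVH file uses is to read the joint chain from the root down to Head and compare it with the Spine->Spine1->Spine2->Spine3->Neck->Head sequence in the thread. The helpers below are hypothetical (not part of the AnyBody repository) and assume the usual BVH layout in which each `{` and `}` sits on its own line.

```python
EXPECTED_SPINE = ("Spine", "Spine1", "Spine2", "Spine3", "Neck", "Head")


def joint_chain(bvh_text: str, leaf: str) -> list:
    """Return joint names from the ROOT down to `leaf` in a BVH HIERARCHY.

    Assumes every '{' and '}' is on its own line.
    """
    stack = []      # names of joints whose '{' block is currently open
    pending = None  # name from the most recent ROOT/JOINT/End Site line
    for line in bvh_text.splitlines():
        tokens = line.split()
        if not tokens:
            continue
        head = tokens[0]
        if head in ("ROOT", "JOINT"):
            pending = tokens[1]
        elif head == "End":
            pending = "End Site"
        elif head == "{":
            stack.append(pending)
            if pending == leaf:
                return list(stack)
        elif head == "}":
            stack.pop()
        elif head == "MOTION":
            break
    raise ValueError(f"joint {leaf!r} not found in hierarchy")


def has_spine_chain(bvh_text: str, expected=EXPECTED_SPINE) -> bool:
    """True if the joints leading to expected[-1] end with exactly `expected`."""
    try:
        chain = joint_chain(bvh_text, expected[-1])
    except ValueError:
        return False
    return tuple(chain[-len(expected):]) == tuple(expected)
```

For example, `has_spine_chain(open("PerceptionNeuron2_Trial01.bvh").read())` would report whether a file uses the four-segment spine; a file with a different spine layout returns `False`, and the marker protocol can then be chosen accordingly.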

FACESHIFT PERCEPTION NEURON PROFESSIONAL

The small attached sensor captures both depth and video at 60 frames per second. The markerless motion capture is made possible by tracking the depth of the face while simultaneously tracking every pixel individually and computing optical flow over time. According to Doug Griffin of Faceshift, this system works “as well or better” than their desktop version and will be used by professional motion capture studios.

The helmet is incredibly lightweight and features carbon arms that allow the user to fit the headset comfortably to their head.

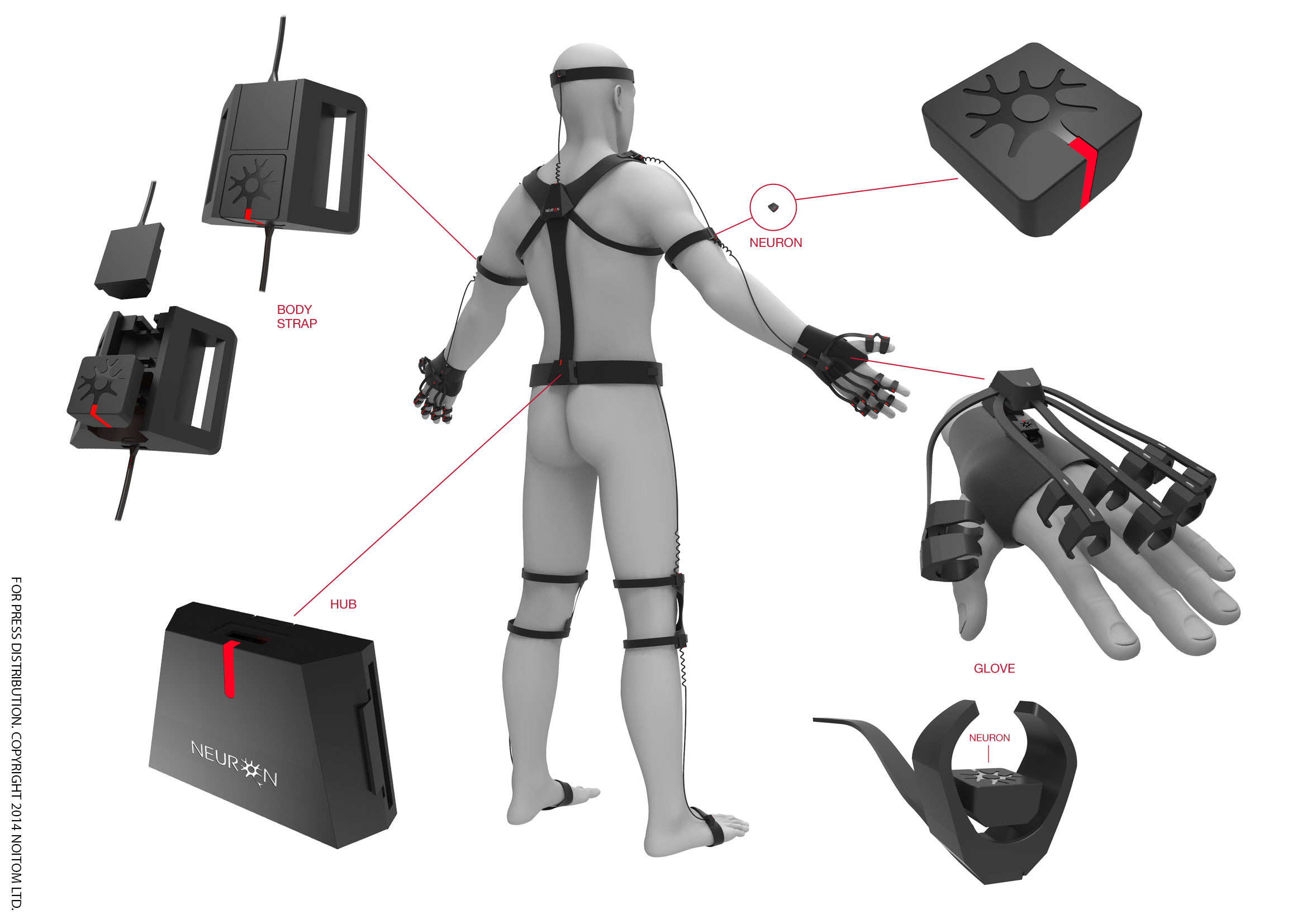

FACESHIFT PERCEPTION NEURON FULL

Faceshift, an impressive markerless facial motion tracking and real-time character animation system, has announced the release of a wireless facial tracking helmet, designed by Mocap Design, that can be used alongside third-party full-body motion capture systems like Perception Neuron. This enables accurate capture of facial expressions during full-body motion capture, allowing content creators to capture life-like body and facial animations simultaneously while the actor moves freely through space. The helmet is being launched alongside the new Faceshift StudioPro software, which boasts a killer set of features including wireless control, remote triggering, TimeCode support, batch processing, and real-time face tracking and targeting.